Why Schedule Risk Analysis is flawed – and how to fix it

Schedule Risk Analysis (SRA) is becoming a common tool for analysing the timescale risk on programmes. However, the way it is typically implemented is fundamentally flawed, to the point where the results are misleading and the process is worse than a waste of time. But there is a better way. First, we need to look at how SRA works.

How Does SRA Work?

Essentially, SRA works by extracting the latest baseline plan from the planning tool, often MS Project, and then interfacing with a Monte Carlo package. This allows the planner to add minimums and maximums to the “mean”, which is effectively the current plan duration.
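As a rough illustration of these mechanics, the Python sketch below runs a Monte Carlo simulation over a plan. It is a minimal sketch under simplifying assumptions: the activities (hypothetical names and durations) run strictly one after another, with no network logic or calendars, and each duration is drawn from a triangular distribution between the minimum and maximum that peaks at the planned duration (the "mean" above is treated as the most likely value). `schedule_confidence` is an illustrative name, not part of any planning tool.

```python
import random

# Hypothetical activities: (name, minimum, planned, maximum) durations in days.
ACTIVITIES = [
    ("Design", 18, 20, 30),
    ("Build", 35, 40, 60),
    ("Test", 8, 10, 20),
]


def schedule_confidence(activities, target_days, runs=10_000, seed=42):
    """Fraction of Monte Carlo runs in which the serial chain finishes by target_days.

    ASSUMPTION: each activity duration is triangular(minimum, maximum),
    peaking at the planned duration, and activities run back to back.
    """
    rng = random.Random(seed)
    on_time = 0
    for _ in range(runs):
        finish = sum(rng.triangular(low, high, planned)
                     for _, low, planned, high in activities)
        if finish <= target_days:
            on_time += 1
    return on_time / runs


if __name__ == "__main__":
    planned_finish = sum(planned for _, _, planned, _ in ACTIVITIES)  # 70 days
    print(f"Confidence of finishing in {planned_finish} days: "
          f"{schedule_confidence(ACTIVITIES, planned_finish):.0%}")
```

The result can only be as good as the planned durations fed in: the simulation spreads outcomes around whatever durations the plan already contains.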

What Goes Wrong With This?

The most fundamental problem with typical SRAs is that the plan being used has already been “squeezed” to fit the required delivery dates. Consequently, when the Monte Carlo analysis is run, it simply takes the (squeezed) mean values, spreads the minimum and maximum dates around them, and therefore gives a result that is far more optimistic than reality. Strangely enough, it almost always comes out at around 80% confidence!

Also, the result is often provided in isolation, i.e. as a percentage confidence with no guidance on what to do to improve it, so it frequently fails the “so what?” test. Sometimes reasons are given by referencing risks that were identified completely separately from the SRA process. Whilst this is better than nothing, at best there will be a lot of guesswork to establish which risks have an impact, where, and by how much.

At a more detailed level, SRAs generally require minimums and maximums to be applied to every activity, which could mean thousands of them. As a result, the job tends to get rushed or delegated to the planner who, whilst understanding the logic of the plan, does not understand the detail of what is planned. Either way, the data is mostly wild guesswork.

Also, for each minimum or maximum, a probability of it occurring must be provided. Again, this is likely to be pure guesswork, entered either by someone who lacks the insight or by someone who lacks the time to think it through for hundreds or thousands of activities.

Applying risk management to an ongoing programme to ‘rescue’ it is quite common; however, it is clearly not the best way to operate. By that point the programme has already gone wrong, so the rescue comes too late, and the cause is often that the estimating assumptions made at the scoping/proposal stage were fundamentally incorrect. If this is the case, you’re just asking for trouble further down the line. Inaccurate estimates at the scoping/proposal stage often lead to insufficient budgets, shortcuts and damaged business relationships, which ultimately undermine the project and lead to delays, scope reductions and even total project or programme failure: the last thing you want after all your hard work.

So What’s Wrong With Traditional Estimating Techniques?

Traditional estimating approaches take ‘good quality’ estimates (e.g. capital assets) and ‘poor quality’ estimates (e.g. resource estimates for activities never attempted before) and simply add them together to produce an ‘add-up cost’ that conceals the uncertainties (i.e. the risk). Further, once these uncertainties are lost in the add-up cost, there is no way of re-analysing them, and therefore no way of managing the cost risk by directly addressing the uncertainties.
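A small illustration of the point, using made-up numbers: two sets of estimates with the same single-point values produce the same add-up cost, even though their outer bounds (the sum of every low and every high value) are very different. The tuple layout and the function names are hypothetical.

```python
# Hypothetical estimates as (point_estimate, low, high), in arbitrary cost units.
# "Good quality": e.g. capital assets with firm quotes.
# "Poor quality": e.g. resource estimates for activities never attempted before.
GOOD_QUALITY = [(100.0, 95.0, 105.0), (50.0, 48.0, 52.0)]
POOR_QUALITY = [(100.0, 80.0, 250.0), (50.0, 40.0, 150.0)]


def add_up_cost(estimates):
    """Traditional estimate: the sum of the single-point values only."""
    return sum(point for point, _, _ in estimates)


def outer_bounds(estimates):
    """Best- and worst-case totals: information the add-up cost throws away."""
    return (sum(low for _, low, _ in estimates),
            sum(high for _, _, high in estimates))


for label, estimates in (("good", GOOD_QUALITY), ("poor", POOR_QUALITY)):
    print(label, add_up_cost(estimates), outer_bounds(estimates))
# good 150.0 (143.0, 157.0)
# poor 150.0 (120.0, 400.0)
```

Both sets report the same add-up cost of 150, so nothing in that single figure shows which one carries the risk.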

What Can Be Done To Improve This?

Quality based costing (QBC) is a proven way of accurately estimating the cost risk in a programme of any size. The term ‘cost risk’ in this context could mean pure cost, timescale or even benefit realisation. It essentially works by capturing the inevitable quality variations in the estimates and underpinning every estimate with its underlying assumptions.

So How Does Quality Based Costing Work?

Quality based costing starts by identifying the strategic cost ‘bricks’ in the project. The term brick is used to avoid confusion with work packages, activities, tasks, etc., and the size of a brick can vary considerably depending on the stage of the project. The first step is to identify all the cost bricks and so build the ‘brick wall’. When this is complete, the total cost structure of the project is represented, with no estimates at this stage. An owner is then allocated to each brick, as accurately as possible, and each brick owner is interviewed to ‘break down’ the brick estimate into its components. This is done by asking structured questions that break the brick down into:

A = Absolute minimum (What would the estimate be if everything went perfectly?)

A + B = Best Guess/Realistic estimate (What would a single-point estimate be, with no added contingency?)

A + B + C = Contingency Added (What would need to be added to make the estimate ‘comfortable’?)

A + B + C + D = Disaster Scenario (Is there an unlikely situation where things go very badly wrong?)
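One way to record an interview is sketched below, assuming each brick owner's four answers are cumulative levels (minimum, best guess, comfortable, disaster) that are stored as the A, B, C and D increments. The `Brick` class, its fields and `from_levels` are illustrative names, not part of a published QBC tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Brick:
    """A cost 'brick' broken down into the A, B, C and D components.

    Each component is an increment on the one before: A + B is the best
    guess, A + B + C adds contingency, A + B + C + D is the disaster scenario.
    """
    name: str
    owner: str
    a: float  # absolute minimum
    b: float  # increment up to the best guess / realistic estimate
    c: float  # contingency needed to make the estimate 'comfortable'
    d: float  # extra cost in the disaster scenario

    def __post_init__(self):
        if min(self.a, self.b, self.c, self.d) < 0:
            raise ValueError(f"{self.name}: components must not be negative")

    @classmethod
    def from_levels(cls, name, owner, minimum, best_guess, comfortable, disaster):
        """Build a brick from the four cumulative answers given in the interview."""
        return cls(name, owner, minimum, best_guess - minimum,
                   comfortable - best_guess, disaster - comfortable)

    @property
    def best_guess(self):
        return self.a + self.b

    @property
    def with_contingency(self):
        return self.a + self.b + self.c

    @property
    def disaster(self):
        return self.a + self.b + self.c + self.d


design = Brick.from_levels("Detailed design", "owner-1", 80, 100, 120, 200)
print(design.b, design.c, design.d)  # 20 20 80
```

Storing increments rather than totals keeps the breakdown explicit, and rejecting negative increments catches answers given out of order, such as a ‘comfortable’ figure below the best guess.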

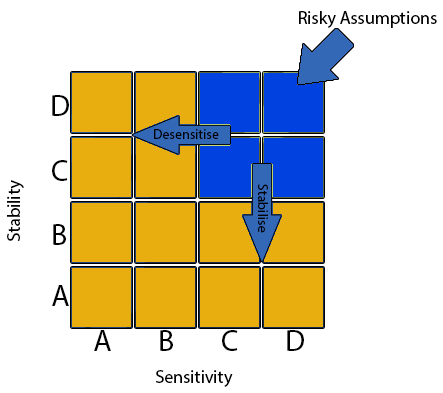

The assumptions that underpin the estimates are also captured using the ABCD (Assumption Based Communication Diagram) assumption analysis process (see the image below). The key factor here is that the rating of the assumptions must be consistent with the estimate breakdown, and the interview often results in challenges to the estimates and/or assumptions to make them mutually consistent. One significant benefit is that inappropriate contingency is stripped out, while required contingency is maintained or added in.

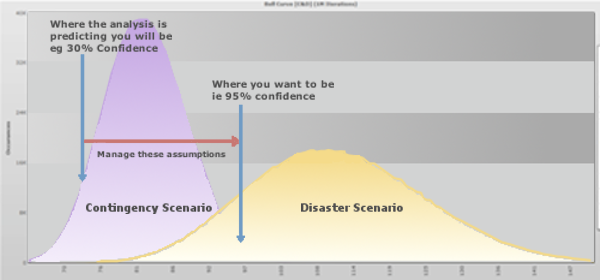

Each brick then has two probability distributions built around the estimates – one for the ‘contingency scenario’ and one for the ‘disaster scenario’, as shown in the diagrams below:

Monte Carlo analysis is a technique used to understand the impact of risk and uncertainty in financial, project management, cost and other forecasting models. When quantifying risk, Monte Carlo analysis is a standard technique for adding probability distributions together, and it is therefore used to add the bricks statistically.

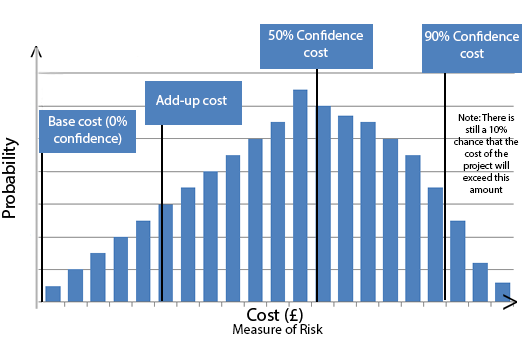
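The statistical addition can be sketched in a few lines of Python. The text does not specify the shape of the two distributions, so this sketch assumes both are triangular, starting at A and peaking at A + B; the contingency scenario ends at A + B + C, and with an assumed 5% probability per brick the disaster scenario (ending at A + B + C + D) is used instead. The brick values, the seed and the run count are arbitrary illustrations.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Brick:
    a: float  # absolute minimum
    b: float  # increment to the best guess
    c: float  # contingency increment
    d: float  # disaster increment
    p_disaster: float = 0.05  # ASSUMED chance of the disaster scenario


def sample_cost(brick, rng):
    """Draw one cost for a brick.

    ASSUMPTION: both scenarios are triangular, start at A and peak at A + B.
    The contingency scenario ends at A + B + C; with probability p_disaster
    the disaster scenario is used instead, ending at A + B + C + D.
    """
    best = brick.a + brick.b
    high = best + brick.c
    if rng.random() < brick.p_disaster:
        high += brick.d
    return rng.triangular(brick.a, high, best)


def simulate_totals(bricks, runs=20_000, seed=7):
    """Add the bricks statistically: one total per Monte Carlo run, sorted."""
    rng = random.Random(seed)
    return sorted(sum(sample_cost(b, rng) for b in bricks) for _ in range(runs))


def confidence_cost(sorted_totals, percent):
    """Cost not exceeded in `percent`% of runs (nearest-rank percentile)."""
    rank = -(-percent * len(sorted_totals) // 100)  # ceiling division
    return sorted_totals[max(rank, 1) - 1]


bricks = [Brick(80, 20, 20, 80), Brick(40, 10, 15, 60), Brick(200, 30, 40, 300)]
totals = simulate_totals(bricks)
print("base cost:", sum(b.a for b in bricks))
print("add-up cost (sum of best guesses):", sum(b.a + b.b for b in bricks))
print("50% cost:", round(confidence_cost(totals, 50)))
print("90% cost:", round(confidence_cost(totals, 90)))
```

Taking the add-up cost as the sum of the A + B best guesses is itself an assumption about what a traditional estimate would contain.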

The resulting probability distributions – see the figure below – can be interpreted to make crucial decisions relating to budgeting, pricing or milestones. For example:

- There is a ‘zero’ probability of the project costing less than the ‘base cost’.

- The 50 per cent confidence cost means that there is a 50-50 chance of the project costing less or more than this value.

- The 90 per cent confidence cost is normally considered to be the ‘ideal’ cost to budget (if this is considered affordable).

- To be meaningful, the project must be funded somewhere between the 50 per cent and 90 per cent costs.

- The add-up cost is simply the value that would have been reached through ‘traditional’ estimating.

- The add-up cost could appear anywhere on the graph, but experience has shown it to be consistently below the 50 per cent point. Is it any surprise, then, that traditional estimating is so far out?
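Reading these values off a simulated profile is straightforward once the totals are sorted. The helpers below (hypothetical names) take sorted Monte Carlo totals, such as those from a QBC run, and return the 50 and 90 per cent confidence costs, the funding range between them, and the share of runs at or below the add-up cost. Percentiles use the nearest-rank method, which is one of several conventions.

```python
from bisect import bisect_right


def confidence_cost(sorted_totals, percent):
    """Cost not exceeded in `percent`% of simulated runs (nearest rank)."""
    rank = -(-percent * len(sorted_totals) // 100)  # ceiling division
    return sorted_totals[max(rank, 1) - 1]


def read_profile(sorted_totals, add_up_cost):
    """Summarise a simulated cost profile in the terms used above."""
    p50 = confidence_cost(sorted_totals, 50)
    p90 = confidence_cost(sorted_totals, 90)
    return {
        "p50": p50,
        "p90": p90,
        "funding_range": (p50, p90),
        # Share of runs at or below the traditional add-up cost.
        "add_up_confidence": bisect_right(sorted_totals, add_up_cost) / len(sorted_totals),
    }


def budget_is_meaningful(budget, profile):
    """True if the budget lies between the 50% and 90% confidence costs."""
    low, high = profile["funding_range"]
    return low <= budget <= high


profile = read_profile(list(range(1, 101)), add_up_cost=42)
print(profile)  # p50=50, p90=90, add-up confidence 0.42
```

An `add_up_confidence` below 0.5 corresponds to the add-up cost sitting below the 50 per cent point on the curve.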

Using QBC For Competitive Advantage

QBC is most effectively used at the proposal stage of a project or programme, to provide the best possible information for competitive pricing and to give confidence that crucial milestones, targets and objectives will be met. In non-competitive environments, it provides a scientific basis for fair budgets and profit. In competitive situations, it allows suppliers to fully understand the level of risk they are taking on if they choose to cut their price or timescales for strategic reasons, whatever those may be. QBC also allows for innovative pricing scenarios, which can produce the most aggressive (fixed) price with the added bonus of reduced risk to the supplier.

- Identify all bricks where a client dependency is driving the uncertainty.

- Take out the C & D components for these bricks and rerun the simulations.

- The difference between the profiles allows a fixed price and a ‘contingency’ to be negotiated.

- Capture and quantify the value of these assumptions in the contract.

- Rerun the simulation periodically (e.g. at each major milestone).
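The steps above can be sketched as follows, assuming the same kind of illustrative triangular scenario shapes and an assumed 5% disaster probability as a plain QBC simulation. The sketch treats the 90% cost of the stripped profile as a candidate fixed price and the gap to the full profile as the contingency to negotiate; that is one possible reading of the third step, and the brick names and values are hypothetical. Reusing the same random seed gives both runs the same random numbers, so the difference largely reflects the stripped components rather than sampling noise.

```python
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Brick:
    name: str
    a: float
    b: float
    c: float
    d: float
    client_dependent: bool = False  # uncertainty driven by a client dependency


P_DISASTER = 0.05  # ASSUMED chance that a brick follows its disaster scenario


def sample_cost(brick, rng):
    # ASSUMED shape: triangular from A, peaking at A + B, ending at A + B + C
    # (or at A + B + C + D in the disaster scenario).
    best = brick.a + brick.b
    high = best + brick.c + (brick.d if rng.random() < P_DISASTER else 0.0)
    return rng.triangular(brick.a, high, best)


def p90_cost(bricks, runs=20_000, seed=7):
    rng = random.Random(seed)  # same seed => same random numbers for each profile
    totals = sorted(sum(sample_cost(b, rng) for b in bricks) for _ in range(runs))
    return totals[-(-90 * runs // 100) - 1]  # nearest-rank 90th percentile


def strip_client_risk(bricks):
    """Take out the C and D components of every client-dependent brick."""
    return [replace(b, c=0.0, d=0.0) if b.client_dependent else b for b in bricks]


bricks = [
    Brick("Requirements", 30, 10, 20, 60, client_dependent=True),
    Brick("Build", 200, 30, 40, 300),
    Brick("Acceptance", 20, 5, 15, 50, client_dependent=True),
]
full = p90_cost(bricks)
fixed = p90_cost(strip_client_risk(bricks))
print(f"90% cost with client-driven uncertainty: {full:.0f}")
print(f"90% cost without it (candidate fixed price): {fixed:.0f}")
print(f"difference to negotiate as contingency: {full - fixed:.0f}")
```

Rerunning the same two calls at each major milestone, with updated brick values, gives the periodic check in the last step.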